Introduction

Data is now a daily dependency for engineering teams, product teams, and leadership. Dashboards, customer reports, alerts, ML features, and business decisions all depend on pipelines that run on time and produce correct output. However, many teams still face the same problems again and again: late refreshes, broken downstream reports, silent data quality issues, and stressful firefighting when something fails.

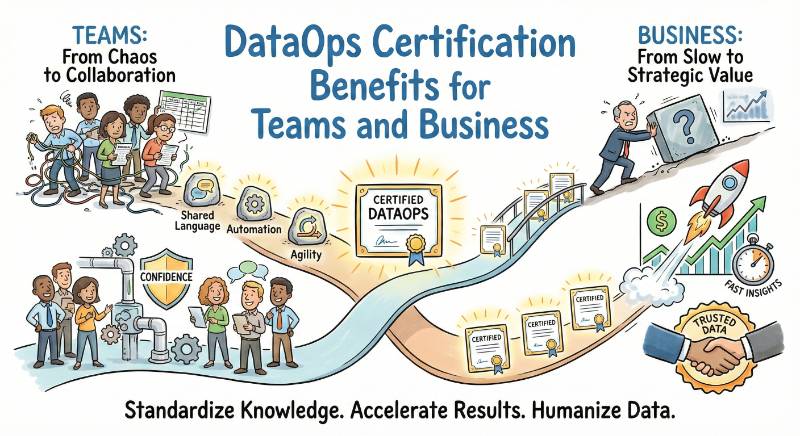

DataOps is a practical way to fix this. It applies proven software delivery habits to data work, so pipelines become repeatable, testable, observable, and easier to operate. The DataOps Certified Professional (DOCP) program is designed to help engineers and managers learn these habits in a structured way and apply them in real projects.

This guide explains what DOCP is, why it matters in real jobs, what skills you gain, how to prepare with clear timelines, and what to do next for your career. It also includes role-based certification recommendations, learning paths, and FAQs that answer the most common career questions.

What DOCP Is

DOCP, or DataOps Certified Professional, is a certification program focused on building and operating reliable data delivery systems using DataOps practices. It validates that you can move beyond “pipelines that run sometimes” and build pipelines that run consistently, recover safely, and produce trusted data.

DOCP is not only about tools. It is about professional habits:

- Designing pipelines for repeatable execution

- Adding automated checks to prevent bad data from reaching users

- Using controlled change management for data logic

- Monitoring both job health and data health

- Handling incidents with clear steps and verification

If your work touches data pipelines, analytics delivery, data platforms, or ML data flows, DOCP is a strong certification to prove you can deliver data reliably.

Why DOCP Matters in Real Jobs

In real jobs, the challenge is not building a pipeline once. The challenge is keeping it reliable when sources change, schemas evolve, new fields appear, business rules update, and deadlines stay tight. DOCP matters because it teaches you how to build a delivery system that can survive real change.

DOCP helps you reduce workplace pain such as:

- Dashboards that refresh late or show wrong numbers

- Pipelines that fail during backfills or reruns

- “Job succeeded” but the output data is still wrong

- Unclear ownership during incidents

- No consistent standards for testing and monitoring

It also increases your value as a professional because you become someone who can bring order and predictability to data delivery. Teams trust engineers who can build pipelines that are safe, observable, and easy to operate.

Who This Guide Is For

This guide is written for working professionals who want a clear, practical understanding of DOCP and how to prepare without confusion.

It is best for:

- Software Engineers moving into data engineering, platform engineering, or analytics engineering

- Data Engineers who want stronger automation, testing, and operational discipline

- DevOps and Platform Engineers supporting data platforms and pipeline reliability

- SRE-minded engineers handling SLAs, monitoring, and incident response for data systems

- Engineering Managers who want to standardize delivery and reduce firefighting

What You Will Achieve After DOCP

After DOCP preparation and hands-on practice, you should be confident delivering production-ready data pipelines with professional discipline.

You will be able to:

- Build pipelines that run daily without manual fixes

- Design idempotent workflows so reruns do not create duplicates

- Add automated data quality checks for schema, freshness, nulls, duplicates, and business rules

- Create validation steps so issues are caught before production

- Set up monitoring for job health and data health

- Handle real incidents with runbooks and verification steps

- Standardize delivery using templates, checklists, and shared patterns

- Support analytics and ML teams with reliable, predictable datasets
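As a rough illustration of the automated quality checks listed above (schema, freshness, nulls, duplicates, business rules), here is a minimal Python sketch. The column names, the 24-hour freshness window, and the `run_quality_checks` function are assumptions made for this example and are not taken from the DOCP material. Many teams would use a dedicated data testing tool rather than hand-written checks.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema for an "orders" dataset (an assumption for this sketch).
EXPECTED_COLUMNS = {"order_id", "amount", "updated_at"}


def run_quality_checks(rows, now, max_age=timedelta(hours=24)):
    """Return a list of failure messages; an empty list means every check passed."""
    if not rows:
        return ["dataset is empty"]

    failures = []

    # Schema check: every row must carry exactly the expected columns.
    for i, row in enumerate(rows):
        if set(row) != EXPECTED_COLUMNS:
            failures.append(f"row {i}: unexpected columns {sorted(row)}")
    if failures:
        return failures  # the checks below assume a valid schema

    # Null check on a required field.
    null_count = sum(1 for r in rows if r["amount"] is None)
    if null_count:
        failures.append(f"{null_count} rows have a null amount")

    # Duplicate check on the business key.
    ids = [r["order_id"] for r in rows]
    if len(ids) != len(set(ids)):
        failures.append("duplicate order_id values found")

    # Freshness check: the newest record must be recent enough.
    newest = max(r["updated_at"] for r in rows)
    if now - newest > max_age:
        failures.append(f"data is stale: newest record is from {newest.isoformat()}")

    # Business rule: amounts must not be negative.
    if any(r["amount"] is not None and r["amount"] < 0 for r in rows):
        failures.append("negative amount violates business rule")

    return failures


# Example with a fixed clock so the result is reproducible.
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
sample = [
    {"order_id": 1, "amount": 25.0, "updated_at": now - timedelta(hours=1)},
    {"order_id": 1, "amount": None, "updated_at": now - timedelta(hours=3)},
]
failures = run_quality_checks(sample, now)  # reports a null amount and a duplicate key
```

Returning a list of messages instead of raising on the first problem lets a pipeline report every failure in one run, which tends to shorten debugging.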

About the Provider

DOCP is provided by DevOpsSchool. The provider focuses on structured learning paths that connect certification preparation with job-ready outcomes.

The approach is practical: you build understanding through real workflow thinking, not only theory. That means focusing on repeatable delivery, testing discipline, monitoring habits, and operational readiness. For official DOCP details, refer to the provider's certification page.

Certification Overview Table

The table below lists DOCP and related certifications by track, level, audience, prerequisites, skills covered, and recommended order. This guide is centered on DOCP; the other rows are related tracks to consider afterward.

| Certification | Track | Level | Who it’s for | Prerequisites | Skills covered | Recommended order |
|---|---|---|---|---|---|---|
| DataOps Certified Professional (DOCP) | DataOps | Professional | Data Engineers, Analytics Engineers, DevOps/Platform Engineers, Engineering Managers | SQL basics, Linux basics, basic pipeline awareness, basic cloud concepts | Orchestration, automation, data testing, observability, operational discipline, governance habits | 1 |
| DevOps Certification (related) | DevOps | Professional | Delivery and platform engineers | CI/CD basics, scripting | Release automation, delivery reliability | After DOCP |
| DevSecOps Certification (related) | DevSecOps | Professional | Security-aware delivery teams | Security basics | Secure automation, controls, governance discipline | After DOCP |
| SRE Certification (related) | SRE | Professional | Reliability-focused engineers | Monitoring basics | SLO thinking, incident response, reliability engineering | After DOCP |
| AIOps/MLOps Certification (related) | AIOps/MLOps | Professional | ML platform and operations teams | Monitoring basics, ML basics helpful | ML delivery reliability, operational automation | After DOCP |
| FinOps Certification (related) | FinOps | Professional | Cloud cost owners and managers | Cloud basics | Cost governance, optimization, accountability | After DOCP |

DataOps Certified Professional (DOCP)

What it is

DOCP validates your ability to deliver reliable data pipelines using DataOps practices. It focuses on automation, repeatability, data quality gates, monitoring, and operational readiness. The goal is trusted data delivered consistently.

Who should take it

- Data Engineers building and maintaining pipelines

- Analytics Engineers maintaining models and serving layers

- DevOps or Platform Engineers supporting data platforms

- Reliability-focused engineers owning data SLAs and freshness targets

- Engineering Managers who want standards and predictable outcomes

Skills you’ll gain

- Data pipeline design for repeatable production runs

- Orchestration patterns: dependencies, retries, timeouts, backfills

- Idempotency and safe rerun strategies

- Automated data testing: schema checks, freshness checks, business rules

- Change control: review, validation, controlled deployment habits

- Monitoring job health and output data health

- Alerting discipline and noise reduction

- Incident handling with runbooks and verification

- Basic governance habits: ownership, access awareness, audit-friendly workflows
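To make the idea of idempotency and safe reruns from the list above concrete, the following sketch replaces one date partition inside a single transaction, so running the same day twice leaves the same rows. It uses Python's built-in `sqlite3` module with an in-memory database. The `daily_sales` table and the `load_partition` helper are hypothetical names chosen for this example, and delete-then-insert is only one of several possible strategies (merge/upsert is another).

```python
import sqlite3


def load_partition(conn, run_date, rows):
    """Replace one date partition atomically so a rerun never duplicates rows."""
    with conn:  # commits on success, rolls back if any statement fails
        conn.execute("DELETE FROM daily_sales WHERE run_date = ?", (run_date,))
        conn.executemany(
            "INSERT INTO daily_sales (run_date, sku, units) VALUES (?, ?, ?)",
            [(run_date, sku, units) for sku, units in rows],
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (run_date TEXT, sku TEXT, units INTEGER)")

rows = [("A-1", 3), ("B-2", 5)]
load_partition(conn, "2024-01-01", rows)
load_partition(conn, "2024-01-01", rows)  # rerun of the same day

# The table still holds two rows, not four.
row_count = conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0]
```

Because the delete and the insert share one transaction, a failure midway leaves the previous version of the partition in place.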

Real-world projects you should be able to do after it

- Build a batch pipeline with automated quality checks and alerting

- Design an incremental pipeline with checkpoints and safe reruns

- Implement a backfill plan with verification before publishing

- Create a pipeline template that teams can reuse consistently

- Build a monitoring dashboard for job and data freshness health

- Write a runbook for common pipeline failures and recovery steps

- Add a controlled release process for data logic changes

Preparation plan (7–14 days / 30 days / 60 days)

A strong DOCP plan is built around practice, not only reading. Each week should include building, breaking, fixing, and verifying a pipeline. The goal is to build confidence in repeatability, quality gates, and operations.

7–14 days (fast-track for experienced engineers)

This plan works if you already run pipelines and want to structure your knowledge. You focus on DataOps concepts, testing discipline, and monitoring. The key output should be one complete end-to-end pipeline project with safe reruns, automated checks, and freshness monitoring.

30 days (balanced plan for most working professionals)

This is the most realistic plan for busy professionals. You build a foundation first, then add testing and delivery control, and finally focus on observability and incident handling. By the end, you should have one capstone pipeline and clear runbooks, checklists, and a validation workflow.

60 days (deep plan for career switch or leadership impact)

This plan is best for people switching roles or aiming to lead improvements across teams. You build multiple pipelines and add deeper operational discipline: alert hygiene, incident drills, documentation standards, governance routines, and standard templates that teams can reuse.

Common mistakes

- Treating DataOps as a tool setup instead of delivery discipline

- No clear definition of freshness or data success rules

- Pipelines are not idempotent, creating duplicates on reruns

- No automated tests, only manual dashboard checks

- Monitoring only job status, not output data health

- No alert routing and unclear ownership during incidents

- Backfills are done without verification steps

- Documentation and runbooks are missing or outdated
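One of the mistakes above, backfills done without verification, can be avoided with a small amount of structure. The sketch below rebuilds one partition per day and publishes a day only when a verification function accepts it. The `backfill` function, the in-memory `source` and `target` dictionaries, and the "non-empty" verification rule are all assumptions for illustration; a real backfill would read from and write to actual storage.

```python
from datetime import date, timedelta


def date_range(start, end):
    """Yield each day from start to end, inclusive."""
    current = start
    while current <= end:
        yield current
        current += timedelta(days=1)


def backfill(start, end, extract, verify, target):
    """Rebuild one partition per day; publish a day only if verify() accepts it."""
    report = {"published": [], "rejected": []}
    for day in date_range(start, end):
        rows = extract(day)
        if verify(day, rows):
            target[day] = rows  # overwrite the whole partition, so reruns are safe
            report["published"].append(day)
        else:
            report["rejected"].append(day)  # leave the existing partition untouched
    return report


# Hypothetical source data: the second day has no rows and should be rejected.
source = {
    date(2024, 1, 1): [10, 20],
    date(2024, 1, 2): [],
    date(2024, 1, 3): [30],
}
target = {date(2024, 1, 2): [99]}  # previously published partition

report = backfill(
    date(2024, 1, 1),
    date(2024, 1, 3),
    extract=lambda day: source.get(day, []),
    verify=lambda day, rows: len(rows) > 0,
    target=target,
)
```

The returned report gives the operator a list of rejected days to investigate, while the previously published data for those days stays available to users.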

Best next certification after this

- If you want deeper data delivery capability, go further in data engineering and data platform specialization.

- If you want stronger production reliability, add SRE discipline and operational maturity.

- If you want stronger controls and compliance habits, add DevSecOps discipline.

How DOCP Works in Real Work

DOCP in real work means running data pipelines like production software: defined expectations, repeatable delivery, automated checks, and reliable operations.

- Define dataset expectations: users, freshness, quality rules

- Build a pipeline designed for safe reruns and backfills

- Add automated checks before publishing curated output

- Use controlled change workflows for transformation logic

- Monitor job health and output freshness continuously

- Route alerts to owners and recover using runbooks

- Standardize pipelines with templates and shared rules
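The "automated checks before publishing" step in the list above is often implemented as a gate between staged and published data. The following is a minimal sketch of that flow, not a prescribed DOCP implementation. The names `publish_if_valid`, `QualityGateError`, and the two example checks are hypothetical, and plain Python lists stand in for staging and published tables.

```python
from typing import Callable, Dict, List, Optional

Row = Dict[str, object]
Check = Callable[[List[Row]], Optional[str]]  # returns an error message, or None if OK


class QualityGateError(Exception):
    """Raised when staged data fails a check and must not be published."""


def publish_if_valid(staged: List[Row], checks: List[Check], published: List[Row]) -> int:
    """Run every check on the staged rows; replace the published rows only if all pass."""
    errors = []
    for check in checks:
        message = check(staged)
        if message is not None:
            errors.append(message)
    if errors:
        raise QualityGateError("; ".join(errors))
    published[:] = staged  # swap in the new version only after every check passed
    return len(published)


def not_empty(rows):
    return None if rows else "staged output is empty"


def no_negative_revenue(rows):
    bad = [r for r in rows if r["revenue"] < 0]
    return f"{len(bad)} rows with negative revenue" if bad else None


published = [{"day": "2024-01-01", "revenue": 100}]
blocked_reason = None
try:
    publish_if_valid(
        [{"day": "2024-01-02", "revenue": -5}],
        [not_empty, no_negative_revenue],
        published,
    )
except QualityGateError as exc:
    blocked_reason = str(exc)  # the bad batch never reaches the published data
```

Keeping each check as a small function makes it straightforward to share checks across pipelines through a template.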

Choose Your Path

DevOps

Best if you already work on CI/CD and platform automation. You apply delivery discipline to data platforms and help teams release data changes safely.

DevSecOps

Best if governance, audit readiness, and access discipline matter. You focus on controlled delivery and risk reduction without blocking delivery speed.

SRE

Best if reliability and incident response are your core responsibilities. You focus on freshness targets, alert quality, and recovery discipline.

AIOps/MLOps

Best if your pipelines support ML workflows. You focus on dataset trust, freshness, monitoring signals, and operational stability for ML data flows.

DataOps

Best if you build pipelines daily. You focus on orchestration, testing, observability, and standardized delivery patterns.

FinOps

Best if cost is a major concern in data workloads. You focus on efficiency habits, workload sizing, and cost-aware operations.

Role → Recommended Certifications Mapping

| Role | Recommended certifications (simple sequence) |
|---|---|
| DevOps Engineer | DOCP → SRE → DevSecOps |
| SRE | SRE → DOCP → AIOps/MLOps |
| Platform Engineer | DOCP → SRE → DevSecOps |
| Cloud Engineer | DOCP → FinOps → SRE (based on responsibility) |
| Security Engineer | DevSecOps → DOCP → SRE |
| Data Engineer | DOCP → deeper data specialization → SRE |
| FinOps Practitioner | FinOps → DOCP → cloud architecture basics |
| Engineering Manager | DOCP → leadership/architecture track → governance and standardization focus |

Next Certifications to Take

After DOCP there are three directions to consider: same track, cross-track, and leadership. Below is a practical way to decide based on common certification groupings for software engineers.

Same track

Go deeper into data engineering and data platform specialization. This is best when your daily work is pipelines, transformations, and data delivery.

Cross-track

Add SRE or DevSecOps based on your job needs:

- Choose SRE if reliability, SLAs, and incidents are your biggest pain

- Choose DevSecOps if compliance, access, and controls are the biggest pain

Leadership

Choose a management or architecture-oriented track if you are responsible for standards across teams. This helps you define delivery frameworks, metrics, governance routines, and platform strategy.

Top Institutions That Provide Training and Certification Help

DevOpsSchool

DevOpsSchool supports structured programs that connect certification learning with real project readiness. It is useful for professionals who want a guided plan, clear outcomes, and practical discipline around delivery and operations. It is also helpful for managers who want a structured way to standardize team practices.

Cotocus

Cotocus is useful for professionals who want a practical, implementation-focused mindset. It can help you connect learning with real workplace scenarios such as pipeline reliability and delivery improvements. It fits teams looking for applied guidance rather than only theory.

ScmGalaxy

ScmGalaxy is often associated with delivery and automation learning ecosystems. It can be helpful for building strong fundamentals in workflow discipline and repeatable practices. It fits learners who want structured training that supports hands-on understanding.

BestDevOps

BestDevOps is helpful for engineers who prefer practical learning and direct application. It can support the mindset of implementing improvements in real pipelines and platforms. It fits professionals who want to connect certification outcomes to day-to-day engineering work.

DevSecOpsSchool

DevSecOpsSchool is useful when secure delivery and governance discipline matter in your environment. It supports learning habits around safer automation, controlled changes, and reduced risk. It fits teams with compliance or audit readiness expectations.

SRESchool

SRESchool is useful if your main focus is reliability and incident reduction. It supports strong habits around monitoring, alert quality, and operational discipline. It fits engineers who want to operate systems with predictable outcomes.

AIOpsSchool

AIOpsSchool can be useful for teams managing large-scale operations and wanting better signals and automation. It supports operational intelligence habits that reduce noise and improve response. It fits teams that run many jobs and want smarter operations.

DataOpsSchool

DataOpsSchool is aligned with DataOps-first learning and practice. It is useful for building end-to-end understanding of pipeline delivery, testing, monitoring, and standardization. It fits professionals who want a direct DataOps-focused learning path.

FinOpsSchool

FinOpsSchool is useful when data workloads drive cloud spending. It supports cost awareness, accountability, and optimization habits while keeping delivery practical. It fits engineers and managers who must balance reliability and cost.

FAQs

- DOCP difficulty

It is moderate for most working professionals. If you already know SQL and have worked with pipelines, it feels practical. If you are new to pipelines, you will need more hands-on practice.

- Preparation time

A 30-day plan works for most people with a job. If you already run pipelines, 7–14 days is possible. If you are switching roles, 60 days is safer.

- Prerequisites

You should know SQL basics, be comfortable with Linux/command line, and understand basic data flow (ingest, transform, serve). Cloud basics help but are not mandatory.

- Coding requirement

You need basic scripting and debugging skills. You do not need deep software engineering, but you should be able to read logs, trace failures, and automate simple steps.

- Who should take DOCP

Data Engineers, Analytics Engineers, Platform Engineers, DevOps engineers supporting data platforms, and managers who want to standardize data delivery.

- Best sequence with other certifications

If you work mainly on data pipelines, start with DOCP. After that, choose SRE for reliability, DevSecOps for controls, or FinOps for cost ownership.

- Value for DevOps and SRE roles

Yes. Modern data platforms need the same reliability and automation habits as software platforms. DOCP adds strong data delivery discipline to your profile.

- Projects that prove DOCP skills

A production-style pipeline with automated tests, safe reruns, freshness monitoring, alert routing, and a short runbook for failures is the strongest proof.

- Career outcomes

DOCP supports roles like DataOps Engineer, Data Platform Engineer, Analytics Engineer, Data Engineer with stronger ops focus, and reliability roles supporting data systems.

- Salary impact

It helps most when you can show real impact such as fewer pipeline failures, faster releases, better trust, and reduced incident time.

- Manager usefulness

DOCP helps managers define standards, set ownership, reduce firefighting, and improve delivery reliability across teams.

- Most common preparation mistake

People read theory but do not build a real pipeline project. The value comes from hands-on work with tests, monitoring, and incident handling.

FAQs on DataOps Certified Professional (DOCP)

- What DOCP validates in real terms

It validates that you can deliver pipelines as a production system with testing, monitoring, safe reruns, and reliable operations.

- The fastest way to build DOCP confidence

Build one end-to-end pipeline with automated checks, safe reruns, freshness monitoring, and a clear verification step before publishing.

- The biggest mindset shift in DOCP

Treat data like software: version it, review it, test it, deploy it safely, and observe it continuously.

- The best capstone project for DOCP

A pipeline that ingests raw data, transforms it into curated tables, runs quality checks, deploys safely, and monitors freshness and anomalies.

- How to handle backfills the DOCP way

Design idempotent loads, use partitions, verify output quality, and publish only after checks pass.

- How to reduce noisy alerts

Alert only on conditions that require action, set thresholds, route alerts to the correct owner, and remove alerts that never lead to fixes.

- What a good pipeline runbook should include

Symptoms, quick checks, common causes, recovery steps, verification steps, and a short communication note.

- What to do right after passing DOCP

Pick one direction based on your job needs: deeper data specialization, stronger reliability through SRE, stronger controls through DevSecOps, or leadership focus for standardization.
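The alerting advice in the FAQs above (alert only on actionable conditions, set thresholds, route to the correct owner) can be sketched in a few lines. The dataset names, owners, and lag thresholds below are invented for illustration, and `freshness_alerts` is a hypothetical helper. In practice this logic usually lives in a monitoring or orchestration tool.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical ownership map and freshness targets (assumptions for this sketch).
DATASETS = {
    "orders_curated": {"owner": "data-eng-oncall", "max_lag": timedelta(hours=2)},
    "marketing_daily": {"owner": "analytics-team", "max_lag": timedelta(hours=26)},
}


def freshness_alerts(last_updated, now):
    """Return one alert per dataset whose lag exceeds its own threshold."""
    alerts = []
    for name, cfg in DATASETS.items():
        lag = now - last_updated[name]
        if lag > cfg["max_lag"]:  # alert only when the agreed target is actually missed
            alerts.append(
                {
                    "dataset": name,
                    "route_to": cfg["owner"],
                    "lag_hours": round(lag.total_seconds() / 3600, 1),
                }
            )
    return alerts


now = datetime(2024, 1, 2, 9, 0, tzinfo=timezone.utc)
last_updated = {
    "orders_curated": now - timedelta(hours=3),    # misses its 2-hour target
    "marketing_daily": now - timedelta(hours=20),  # within its 26-hour target
}
alerts = freshness_alerts(last_updated, now)
```

Giving each dataset its own threshold and owner means a daily report does not page anyone for being a few hours old, while a near-real-time table does.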

Conclusion

DOCP is valuable because it trains you to deliver data with repeatability, quality discipline, and operational readiness. Instead of relying on manual checks and last-minute fixes, you learn to build pipelines that can run daily with predictable results and safe recovery options.

If you follow a structured preparation plan and complete at least one end-to-end project with automated quality gates and monitoring, you will gain skills that directly match real job expectations. After DOCP, choose your next certification direction based on your role: go deeper in data delivery, strengthen reliability with SRE, add controls with DevSecOps, or move toward leadership by standardizing practices across teams.